Measuring What Matters: Steering Innovation and Success

Of all the business books I've read, "Measure What Matters" is one of my favourites. Its concept is simple: set clear objectives that drive an outcome, and attach measurable key results that align your efforts, organization, and stakeholders. Focus on what truly matters, and track your progress over time. This fosters a culture of transparency and accountability, helping everyone from startups to enterprise organizations achieve their mission and vision, improve continuously, and set their innovation up for success.

In day-to-day practice, it's rarely as easy as that sounds. That is exactly why we have to be deliberate about selecting meaningful metrics that drive our innovation, rather than picking objectives and metrics just to have something to check off a to-do list.

Test to Impress: Unit Testing Principles

Unit testing is one of the most essential parts of building robust software. However, it isn't about writing tests for the sake of tests; it's about crafting practical tests that efficiently ensure your software's quality, scalability, and reliability. This is where we need meaningful measurements: ones that tell us whether our tests and our approach are setting us up for success by driving improvements in code quality and product reliability.

Knowing what to test and how to test it is key when architecting a proper testing approach. Ideally, we aim for minimum viable testing, because test code is still code: the more of it we write, the more we have to support and maintain. By focusing on behaviour over implementation, we can target the heart of our software (mainly the value proposition and the user stories) without overwhelming our developers with overzealous testing. This leads to a better developer experience, a better overall product, and a more agile framework that allows us to deliver value to our end users.
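To make "behaviour over implementation" concrete, here is a minimal sketch. The `ShoppingCart` class and its methods are hypothetical, invented purely for illustration. The test only touches the public interface, so the internal storage could change from a dictionary to a list without the test needing an update.

```python
import unittest


class ShoppingCart:
    """Hypothetical example class; how items are stored is an implementation detail."""

    def __init__(self):
        # Maps item name -> (unit price in cents, quantity).
        self._items = {}

    def add(self, name, unit_price_cents, quantity=1):
        # Adding the same item again increases its quantity.
        _, existing_qty = self._items.get(name, (unit_price_cents, 0))
        self._items[name] = (unit_price_cents, existing_qty + quantity)

    def total_cents(self):
        return sum(price * qty for price, qty in self._items.values())


class ShoppingCartBehaviourTest(unittest.TestCase):
    def test_total_reflects_everything_added(self):
        cart = ShoppingCart()
        cart.add("notebook", 450, quantity=2)
        cart.add("pen", 120)
        cart.add("pen", 120)
        # Assert on what the user cares about (the total),
        # not on the contents of cart._items.
        self.assertEqual(cart.total_cents(), 1140)
```

Saved to a file, this can be run with `python -m unittest`. A test that instead asserted on `cart._items` would break on any refactor of the storage, even when the behaviour users see is unchanged.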

Code Review: Navigating the Bottlenecks

Code reviews were some of the most pivotal moments of my time as a junior engineer; they were where I learned the most from my mentors. However, they were often also some of the most cringe-worthy, embarrassing, and harsh events of my early career. On top of that, many gatekeepers focused entirely on the wrong things during review, turning it into a dull, tedious process that brought little value to the team or our end users. Everyone came to dread it, which meant a worse developer experience and slower, less agile delivery.

This is where we need to step back and take a meta-approach: understanding our code review processes and the bottlenecks that stall innovation by delaying new features and the release of value to our customers.

The primary goal of any engineering team is to create solutions to real-world problems based on user stories and pain points. If we have artificial processes that slow this development cycle, we're not doing ourselves any favours, and we're not doing our job as engineers delivering solutions. Our customers become dissatisfied, and we slow our own pace of learning.

If we want to understand and measure how well we're doing as engineering teams, we need to take a step back and measure our actual processes. Ensuring they're setting us up for success will expedite value delivery to the world.
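As one way of measuring the process itself, the sketch below computes two review-latency figures: the median time from opening a pull request to its first review, and the median time to merge. The `PullRequest` record and its field names are assumptions made for illustration; in practice the timestamps would come from whatever your code hosting platform exports.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median


@dataclass
class PullRequest:
    """Hypothetical record; field names are illustrative only."""
    opened_at: datetime
    first_review_at: datetime
    merged_at: datetime


def hours_between(start, end):
    return (end - start).total_seconds() / 3600


def review_metrics(prs):
    """Return median hours to first review and to merge for a non-empty list of PRs."""
    to_first_review = [hours_between(p.opened_at, p.first_review_at) for p in prs]
    to_merge = [hours_between(p.opened_at, p.merged_at) for p in prs]
    return {
        "median_hours_to_first_review": median(to_first_review),
        "median_hours_to_merge": median(to_merge),
    }
```

Medians are used here rather than means so that a single pull request left open over a holiday does not dominate the picture. Tracked over time, a rising time to first review is an early hint that review has become a bottleneck.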

Engineer Excellence: 7 Habits for Software Success

Sometimes, being an expert just means doing simple things well, and it all starts with setting clear goals, measuring progress, and continually iterating and improving. This sets you and your team up for success, and it aligns the expectations of your stakeholders so that your organization is set up for success as well.

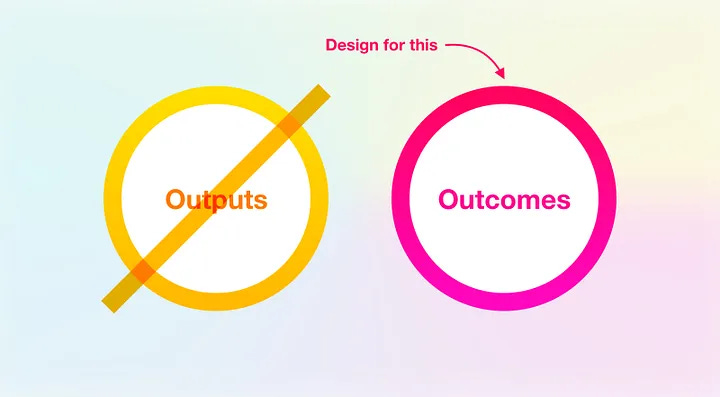

At the end of the day, the implementation details and processes don't matter. What really matters is whether you're an engineer who can deliver value and outcomes to the customer.

Hardware Hurdles: From Prototype to Production

Hardware is complicated, and we need to be very conscious of the steps we take and of our approach to design throughout the development lifecycle as we move from prototype to production. Unlike in software, we can't simply hit F9 and recompile, so we need to focus on setting clear objectives, monitoring our progress, and having clear 'go/no-go' criteria that let us pull the plug or pivot early if needed. This way, we can make data-driven decisions to ensure we build the right product.
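A go/no-go gate can be written down as explicitly as a test suite. The following is a minimal sketch under stated assumptions: the `Criterion` type, the criterion names, and the thresholds are all hypothetical, and a real gate would use whatever measurements your prototype phase actually produces.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    """Hypothetical go/no-go criterion: a measured value compared to a threshold."""
    name: str
    measured: float
    threshold: float
    higher_is_better: bool = True

    def passed(self):
        if self.higher_is_better:
            return self.measured >= self.threshold
        return self.measured <= self.threshold


def gate_decision(criteria):
    """Return ("go", []) if every criterion passes, else ("no-go", [failed names])."""
    failed = [c.name for c in criteria if not c.passed()]
    return ("go" if not failed else "no-go", failed)


# Illustrative values only; not taken from any real product.
prototype_gate = [
    Criterion("first_pass_yield", measured=0.93, threshold=0.90),
    Criterion("unit_cost_usd", measured=48.0, threshold=40.0, higher_is_better=False),
]
decision, failed = gate_decision(prototype_gate)
```

Agreeing on the thresholds before the measurements come in is the point: it keeps the decision to continue, pivot, or stop tied to data rather than to sunk cost.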

Ship It: AI Deployment and Real-World Impact

Just deploying the product isn't enough; that's simply an output, not an outcome. We need to measure how our products and designs interact with the real world and how our customers interact with our products.

It's crucial to verify whether they find the value they need or want, and whether we've properly targeted their user stories and pain points. This is where we need to create tangible metrics that measure real-world value and interactions, especially with AI products. This data-driven approach enables us to learn and iterate, continuously enhancing the product's value proposition based on real-world data.

Crafting for Meaningful Outcomes

As I've grown in my career, I've realized more and more that it's less about the technical challenges and more about product-market fit: focusing on your customers' needs and the outcomes that set them up for success. If you can achieve this, you set yourself and your venture up for success by focusing on meaningful business results that deliver real-world value. The technology is just an implementation detail; the solution and value you provide to your customers are the real essence of innovation.

But you need some way of measuring customer satisfaction and business impact to drive meaningful design innovation. It can be as simple as talking to your customers and being present, not only early in the discovery phase but through their whole journey and beyond. This ties in nicely with standard business planning practices like KPIs and OKRs: the point is not to have metrics for the sake of metrics or to check off a to-do list, but to define measurements, targets, and objectives so that they capture the tangible outcomes that drive valuable user experiences and innovation.

Productivity: Where McKinsey Missed the Mark

McKinsey's method of measuring developer productivity is flawed, yet it underscores the desire to find a way to measure productivity without hindering it. If we set aside the micromanagement issues that often plague productivity measurement frameworks and focus on the principle that measuring the right things can lead to improvement and growth, we start to get an idea of what we need.

It often comes back to the core ideals of the Agile Manifesto, which values individuals and interactions over processes and tools. This "people" aspect extends both to your end customers (understanding their user stories, needs, and pain points) and to your internal engineers and development teams (gaining insight into their experiences throughout the project journey). This is where McKinsey largely misses the mark: they focus mainly on measuring effort and raw output, overlooking the more crucial aspects of outcomes and impact, which are fundamental in engineering and delivering viable solutions to the world.